Criminal justice systems exist to protect public safety whilst respecting human rights and the presumption of innocence.

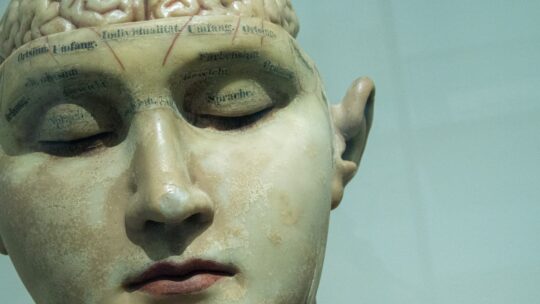

The narrative is compelling: AI systems that can detect your emotional state from facial expressions, vocal tone, body language, or physiological signals.

I can begin working on a problem at 10am and suddenly realise it's 10pm, that I haven't eaten, used the toilet, or checked my phone.

Prison suicide attempts are far more frequent, with thousands of self-harm incidents reported annually across the UK prison estate.

Insurance companies can price policies with greater accuracy by incorporating hundreds of data points rather than relying on crude demographic categories.

The numbers alone are remarkable: one trillion parameters, which would make it one of the largest language models ever trained.

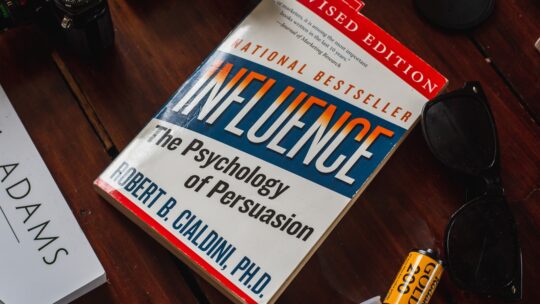

They require deliberate thinking, careful consideration of evidence, willingness to update your beliefs, and acceptance of uncertainty.

They're individuals with mental health problems, addiction issues, and trauma histories who are being warehoused rather than managed.

AI systems can ingest these datasets, identify patterns, and generate predictions that help us understand climate dynamics with greater precision.

Whether deployed by government or private companies, social scoring systems create perverse incentives and erode human autonomy.